Software Engineer

NVIDIA

Hi!

I’m a Software Engineer working on Deep Learning Libraries at NVIDIA.

I completed my MS in CS at Georgia Tech, where I was advised by Prof. Alexey Tumanov in the Systems for Artificial Intelligence Lab (SAIL), working on making machine learning applications, tools, and algorithms cheaper and more accessible.

In Summer 2020, I interned at NVIDIA with the TensorRT team, optimizing systems for deep-learning inference on GPUs.

Prior to starting at Georgia Tech, I was an ML Software Engineer at Samsung India R&D, where I helped bring flagship vision applications to low-power devices through the Samsung Neural SDK.

I completed my Bachelor’s degree in Math & Computing at Delhi Technological University.

News

- Jul 2021: Joined NVIDIA as a Software Engineer on the Deep Learning Libraries team

- May 2021: Completed my MS in CS (Machine Learning) at Georgia Tech

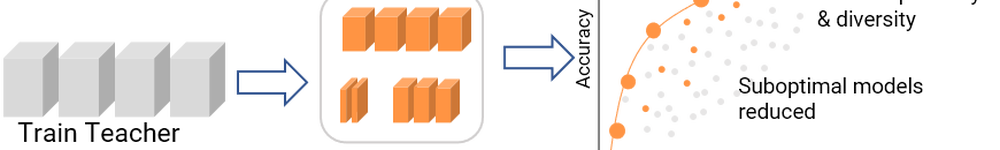

- Jan 2021: Our paper was accepted to ICLR 2021! CompOFA: Compound Once-For-All Networks for Faster Multi-Platform Deployment

- Jan 2021: I will be serving as Head TA for CS7643 Deep Learning in Spring 2021

Interests

- Systems for Deep Learning

- Neural Architecture Search

- Computer Vision

- High-Performance Computing

Education

- M.S. in Computer Science, 2019-2021, Georgia Institute of Technology

- B.Tech. in Mathematics & Computing, 2013-2017, Delhi Technological University